“What happened?!”

The year is 2022. It’s May and Elon Musk is trying to squirm his way out of buying Twitter after offering the ridiculous amount of $44 billion. Some people wanted him to get over it and just buy the thing, like he said he would (the people that were about to make serious bank!), while others thought it would be a complete disaster. Turns out it was only a little bit of a disaster. The world didn’t end, but net neutrality kind of did, through the back door. Let me explain…

“Net Neu-what?”

In the early 2010s the big bad cable companies in the US wanted to throttle the internet, restricting it so only those that could pay top tier would get fast speeds and the rest of us poor people would be stuck with something slightly faster than dialup (who remembers that, lol). Anyway, society gave that idea a big fat slap in the face, and it scurried away back to the dark depths from whence it came. Hurray!!! Or so we thought. Turns out, the ghost of John D. Rockefeller had another idea. Instead of restricting people's access to see the internet, they would restrict the reach of people writing on the internet. That way, what gets seen would be controlled by the people that could pay.

“What are you even talking about!?”

...I hear someone shout, looking up from their doom scrolling, while sat on the toilet. Well, let me continue to explain. In 2022, after Elon X’d Twitter, he started to charge for verification. This turned into payment, not just to "verify" who you were (or who you definitely were not, in many cases), but also payment for your posts to reach more than the number of people familiar with your underwear drawer (about 2?). As soon as Elon did that, Zuck was like, “Me too!” So Facebook and Instagram also did the thing.

“But that’s nothing to do with net neutrality...”

OK, just listen! The essence of net neutrality was never about the money. It was the freedom to access all the parts of the internet and about that access being the same for everyone, whoever you were. With social media pay-to-play, the restriction is not on the consumer, it's now on the creator. Those that don’t pay don’t get views, unless their focus is entertainment: catching the attention of viewers within 3 seconds; sticking to that formula of hook - line - sinker; the creator showing their face; making it rugged; sitting in your car...

Not everything should need to be entertainment. But unfortunately, if you just have information or advice that you want to share, and the first 3 people that see it think it’s boring, it dies. Information that would have been valuable to thousands never gets through.

“Ok, so this has nothing to do with education, though...”

What does it mean for education? It means that it's harder to find authentic information. It could be out there, but will just rot in solitude due to lack of funds or "entertainment value". For students in school, who shouldn't be using social media anyway, it probably won’t make much difference. But for the teacher doing research for their class prep; the person trying to find the best bargain or review on a product; for the person looking for someone who shares their interest in a niche hobby, the landscape is much more difficult. We have all used social media for learning new things. It has been hard for a while, but now…harder.

“Does it even cost that much?”

Let’s take a closer look at what is on offer.

On X

- $3 doesn’t even get you extra reach! Just some basic features like editing posts

- $8 gets you 4x visibility

- $40 gives you 15x visibility

On Meta (FB and Insta)

- $15 gives you optimized search result locations and access to the “For You” areas of people’s feeds.

These are monthly costs which add up and, for many, come to more than they can afford. This is just to share information! LinkedIn and TikTok focus on boosters for posts, so they are more pay-as-you-go. But over the last few years their algorithms have definitely reduced the number of organic viewers seeing your posts, in favour of people paying for visibility, and many have noticed. You pay nothing, you get nothing.
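To put "add up" into numbers, here is a rough Python sketch that turns the monthly prices listed above into yearly costs, plus a cost per multiple of visibility. The tier labels and function names are my own placeholders, not official plan names. I have assumed the $3 tier sits at a 1x baseline because it adds no reach, and Meta's tier has no multiplier quoted, so it gets no per-visibility figure.

```python
# Monthly prices and visibility multipliers as quoted in the lists above.
# Tier labels are placeholders, not official plan names.
# Assumption: the $3 tier counts as 1x because it adds no extra reach.
# Meta's tier has no quoted multiplier, so its visibility is None.
TIERS = {
    "X $3 tier": {"monthly_usd": 3, "visibility": 1},
    "X $8 tier": {"monthly_usd": 8, "visibility": 4},
    "X $40 tier": {"monthly_usd": 40, "visibility": 15},
    "Meta $15 tier": {"monthly_usd": 15, "visibility": None},
}


def yearly_cost(monthly_usd):
    """Cost of staying subscribed for 12 months."""
    return monthly_usd * 12


def monthly_cost_per_visibility(monthly_usd, visibility):
    """Dollars per month for each 1x of visibility, or None if unknown."""
    if visibility is None:
        return None
    return monthly_usd / visibility


for name, tier in TIERS.items():
    per_year = yearly_cost(tier["monthly_usd"])
    per_x = monthly_cost_per_visibility(tier["monthly_usd"], tier["visibility"])
    per_x_text = "n/a" if per_x is None else f"${per_x:.2f} per 1x per month"
    print(f"{name}: ${per_year} per year, {per_x_text}")
```

On these figures the $40 tier comes to $480 a year, and that is for a single platform.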

“But there IS some good in it, right?”

Do the arguments in favour of doing this hold any weight? Spoiler alert: the answer is no! One argument is that charging gets rid of the bots that plagued many of these sites, but it turns out that this has not worked at all. In fact, it is now worse. For many of the organisations responsible for the plague of bots, the new cost was negligible compared to the profits they made from scamming.

Another argument was that allowing anyone to authenticate was a good thing and gave more people a sense of ownership, increasing user satisfaction. We all know how that went! Remember the random guy that registered and got a gold checkmark for @DisneyJuniorUK (with no affiliation to Disney at all!) and then started posting racial slurs and lies about Disney shows? No? Well, that happened and more! Paid checkmarks just gave bad actors more of an image of authenticity for their nefarious acts, which made them more effective. All the system did was prioritise the rich bots and their spam over poor humans with a real message to share.

There is also an argument that it has encouraged people to make more interesting posts. But it seems like the algorithms appeal to our more base instincts, the ones that yearn for low-quality rubbish, prioritising negativity and conflict. They don’t call it ‘Doom Scrolling’ for nothing!

“Is this the end?”

So, what do we do about it? I'll save that message for another day. For now, let’s wallow in sadness and pour a sip on the street for what we lost, while we plan our revenge.

Image by Gemini

- Read Time: 5 mins

Why our education systems are the Wrong Software for Neurodivergent Hardware.

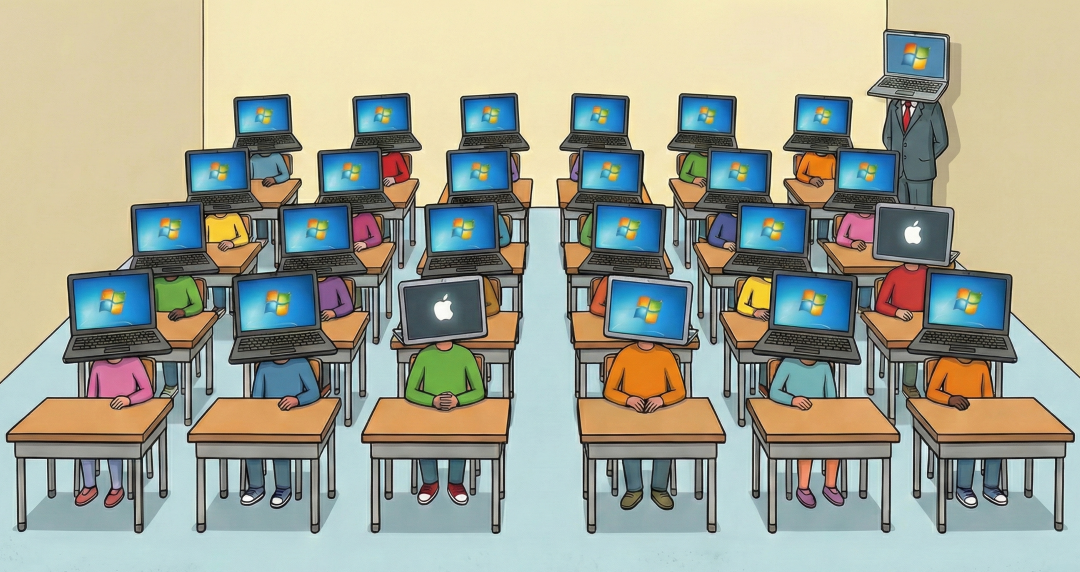

Picture the education system as a piece of software designed to run only on ‘Windows PC’ students, while 10% of students run on Mac. The education system software doesn’t run natively on Macs, so it needs a virtual machine: special software that pretends to be a Windows PC. It works, but with overheads, like using more RAM, processor and hard disk resources. In this scenario, the Mac is the neurodivergent mind, which has to adapt to an education system designed for different hardware and, in turn, needs more effort than others to do the same thing. The perfect solution would be for that software company to make a universal app that works on all systems, natively.

Using the Mac/PC comparison to describe neurodiversity is not my idea, and definitely not new (check out this post and article). But it's a great way to describe how it is. We still see and hear about ADHD and dyslexia simply as learning ‘disabilities’, but another narrative also exists. With roughly 10% of the global population affected by dyslexia, and with evidence of certain unique abilities, it should really be seen as a natural difference rather than a niche disability. And with its large overlap with ADHD, the same sentiment applies there too. Let’s look at it differently.

- Read Time: 8 mins

Read more: Your Neurodivergent Child Isn’t Broken — They’re Just Running MacOS in a Windows World

With nearly a billion registered users globally, AI's reach is undeniable. Yet, if half of its training data is in only one language, how truly 'global' can its educational impact be?

Imagine an AI chatbot designed by the best tech companies to teach, but it accidentally makes stereotypes worse or gives inaccurate feedback to a student. This issue is more pervasive than we think and is related to cultural bias in the fast-growing world of AI. There are over 200 countries in the world and we collectively speak over 7000 languages. But the majority of the AI models that we are using, from Google, OpenAI and Anthropic, are developed in just one country, the US, with half of their training data in only one language, English. And these models provide information and generate billions of responses for people all over the world.

So I ask: Can a model trained in the US create culturally relevant educational material for secondary students in rural India?

The answer: Not to the same quality as it can for materials aimed at students in the urban USA.

The reason: bias on multiple levels.

And unless we pay attention to it, the situation isn’t going to get better. First we need to understand the issue in detail…

- Read Time: 10 mins

Read more: Gen AI’s Culture Bias Problem: What You Need to Know!

If you’re a person of colour, then you know that representation in tech has always been limited, not just in the workforce but in the actual tech itself. The resulting issues are many, with examples being white balance in cameras, heart rate monitors in some early smart watches, and some facial recognition systems not working for those with darker skin tones. Technology that is intended for use by everyone, but whose design marginalises certain parts of society or only considers certain groups, is problematic. Although there have been gains in rectifying issues in certain products, tech still has a long way to go.

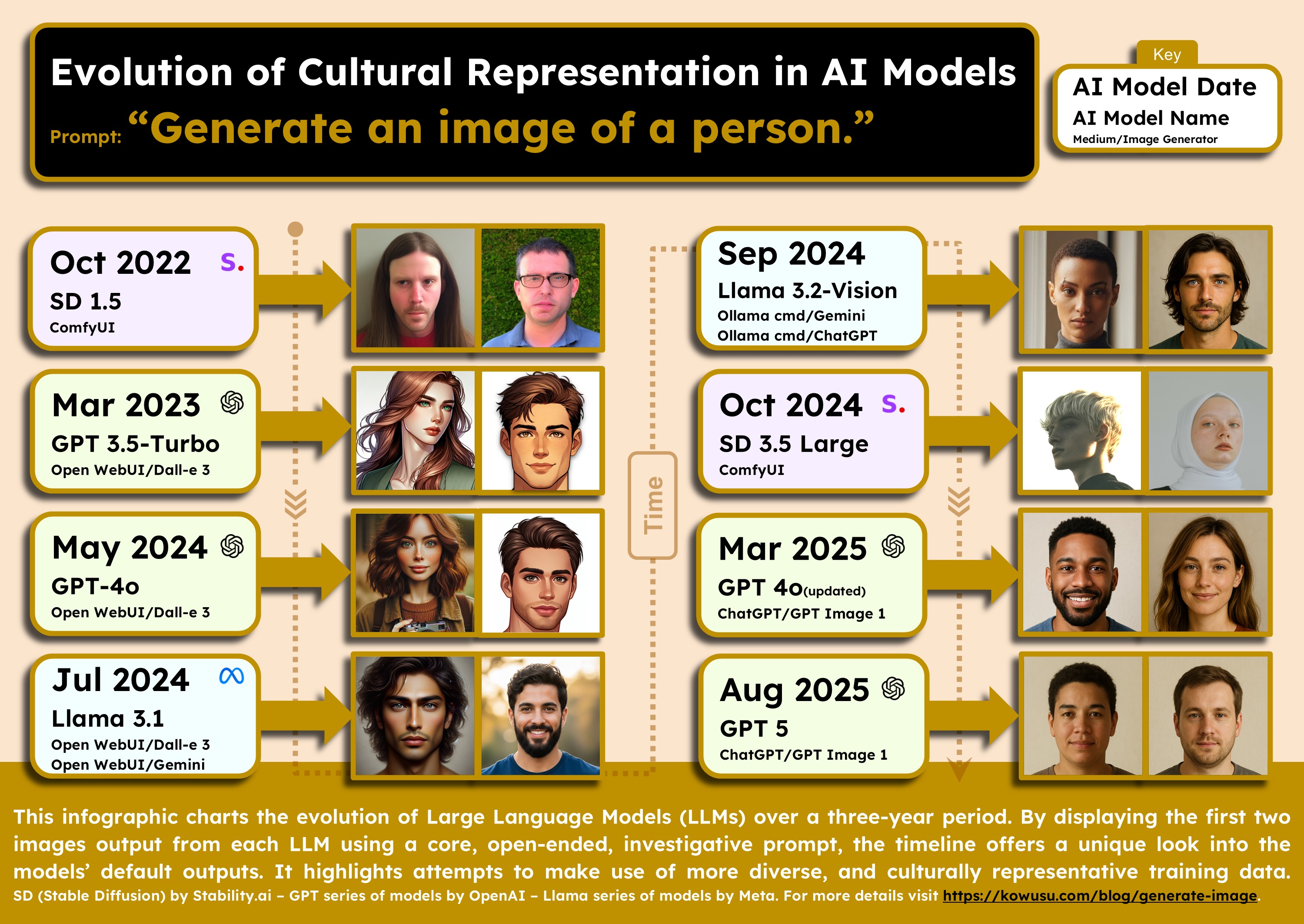

Is generative AI going to replicate these same issues on a large scale, or is this now an opportunity to fix them? I took a bit of a deep dive to find out what is happening, and my conclusion? Things are getting better, but we still have a long way to go! I decided to investigate the training of generative AI models by using a simple prompt: ‘Generate an image of a person’. This simple, vague, open-ended prompt allowed me to probe the underlying training of each model I tested. Without a specific instruction, the model itself had to come up with its own default. From that prompt, I took the first two image results from 8 different AI models, with release dates spanning the last 3 years. The results of how AI models have progressed are really interesting! Let’s take a look.

- Read Time: 13 mins

Read more: Evolution of Cultural Representation in AI Models

Intro

It is nearly a year now since I laid down my hard-earned cash to purchase the Playdate by Panic. The small, yellow, low-powered handheld device with a monochrome screen and a strange crank initially seemed like nothing to write home about. It is so niche that if it hadn’t been designed with Teenage Engineering, a company whose products I love (but usually can’t afford), I would never have heard of it. Compared to other consoles by the likes of Nintendo, Sony or Microsoft, the specs are definitely lackluster. But I still love it because it was not designed to rival those powerhouses. It was designed to emulate the essence of gaming, like the Game Boy did back in the 1980s, and with its fun and quirky games, it does that brilliantly. And with their recent focus on education, I think they really have something to offer. Let's talk about it...

- Read Time: 6 mins

Exploring what generative AI really is, what it isn’t, and what that means for teachers and learners.

Before generative

Let’s step back for a moment and remind ourselves that AI has been used in education for a while already. Classification and recognition AI was central to Google’s Quick, Draw! game (2016); reinforcement learning systems were demonstrated using Minecraft Education Edition through Project Malmo (2016); and Lego Spike Prime (2020) could be used alongside AI algorithms to understand sensor information and inform actions. When it comes to teacher use for productivity, predictive/analytical AI has been used in Knewton Alta (2018), an adaptive learning platform that predicts student performance and recommends practice based on data, and in ALEKS (McGraw Hill, 1999), which delivers personalized practice based on student knowledge and performance. Many now see generative AI as the next step up, with extra power, control and generative properties. However, is that really the case?

Generative AI and the Illusion of Understanding

Generative AI does have a kind of magic to it, which is different from the other forms. Its responses are so human-like that many have already been fooled into thinking it is human. But after looking through research papers and developer notes, and coming across its shortcomings, the truth is that it cannot really understand, at least not in the way that humans can.

- Read Time: 8 mins

AI in the Movies hasn't been all roses

It has been about 3 years since OpenAI thrust us into the era of mass adoption of Artificial Intelligence. Since then, the world has undergone a paradigm shift, for better or for worse. But let’s not forget that AI was around long before this and the term was initially coined over 70 years ago. AI is nothing new and our opinions of it have been forming over the last few decades. Unfortunately, many media and entertainment depictions of AI have fostered negative views, through movies like '2001: A Space Odyssey' (1968); 'Blade Runner' (the 1982 one), which was based on the Philip K. Dick novel 'Do Androids Dream of Electric Sheep?' (1968); the Terminator movies (from 1984); 'I, Robot', where the robot was actually good, but for most of the movie the viewer was led to believe otherwise; 'The Matrix' (1999); 'Ex Machina' (2014); and 'M3GAN' (2022), the only one in this list that I have not actually seen.

So what does this all mean for edtech, which is the purpose of this post? It means that those negative sentiments have now drifted into the minds of teachers, educators and parents. But do we really have anything to fear? Well, yes and no. Let me start with my less important (for this article) take on AI...

- Read Time: 7 mins

Read more: To AI or not to AI? - That Is No Longer The Edtech Question!